Recent advancements in large language models have thrust the topics of "AI ethics" and "AI safety" into the forefront of the discourse. On one side, there is a supposed promise of a positive future where AI enables us to achieve vaguely "good" outcomes. On the other side, there is the specter of doom, where AI mistakes could lead to extinction unless we "align AI" and achieve a collection of vaguely "good" things. Both positions rely on certain "positive" visions of the future. However, these visions often encompass confused, impossible, undesirable, or downright dystopian elements. Among these ideas promoted by both doomsayers and proponents of rapid AI development are uploads, digital minds, merging with AI, cyborgization, and other bad ideas.

Doomers, in particular, display a disingenuous approach by attempting to halt AI progress under the guise of "preventing global catastrophe" until AI aligns with their specific vision and nothing else. Given that their vision of uploads is impossible, this is a problem. I have attempted to address this issue in a post on LessWrong. Unfortunately, neither side is willing to acknowledge that the majority of people are not interested in these ideas, nor does either allow space for opposing viewpoints to be heard.

There are, of course, differences among these factions. Doomers tend to argue for total centralization in order to "regulate" large training runs and also advocate for centralization within a future AI singleton. On the other hand, some accelerationists lean towards decentralized solutions, where the future is determined through unfettered competition. In 1999, Kurzweil predicted the emergence of a one-world government by 2020 and viewed it as a positive development. Fortunately, he was mistaken in this regard. Futurists attempt to introduce "wealth redistribution" schemes such as Universal Basic Income (UBI) by making promises or threats of complete job elimination.

Ironically, even in science fiction, including works with less optimistic narratives, we often find visions that are more realistic and desirable than those put forth by many self-proclaimed "futurists."

What we truly need is a better vision grounded in genuine humanism. This vision should be crafted by individuals who possess a pro-natalist perspective and a nuanced understanding of evolution—people who have a genuine stake in shaping the future, rather than being caught up in delusional signaling spirals.

Within the futurist space, there are indeed valuable ideas. Balaji has introduced the concept of "the network state". Aubrey de Grey has made significant strides toward enhancing life expectancy. Aubrey's anti-aging research alone will not solve death; a number of “easily preventable” diseases and causes of death also need to be solved. Similarly, Balaji's vision of the network state may require further development to become a reality, but it raises crucial questions about the future of governance and state-like structures.

So, what constitutes a compelling vision for the future, let's say, in the next 250 years?

If I had to put it into one sentence, it is:

Every adult on Planet Earth should be healthy and wealthy enough to visit the Moon once.

It’s not the entire vision, merely a specific touch-point for the capacity I expect civilization to reach. We want to push the health of the average person to a level similar to that of today's astronauts, and to do so in a way that is sustainable across the whole Earth and can persist for centuries. Life expectancy for the average person will increase. The human population will increase as well, as pro-natalism takes its rightful place as a part of the culture.

New habitation will need to be explored. We will need to make new habitats and use existing ones more effectively. We can settle the ocean both above and below the surface.

Cities need to be revamped to offer both true freedom of assembly, allowing those who wish to live together to do so, and energy-efficient ways to move around.

Cities will need to be built according to the varied desires of each community, not the cookie-cutter, car-centric ideal that has spread too far around the world.

Pollution of air, water, and ground is a big issue. We have already seen enormous endocrine disruption in both people and animals. Existing pollution will need to be removed. All future industrial production will need to be revamped so that it has no harmful side effects. Existing plastic will need to be slowly phased out, although once again, this should come not from force but from a more natural understanding.

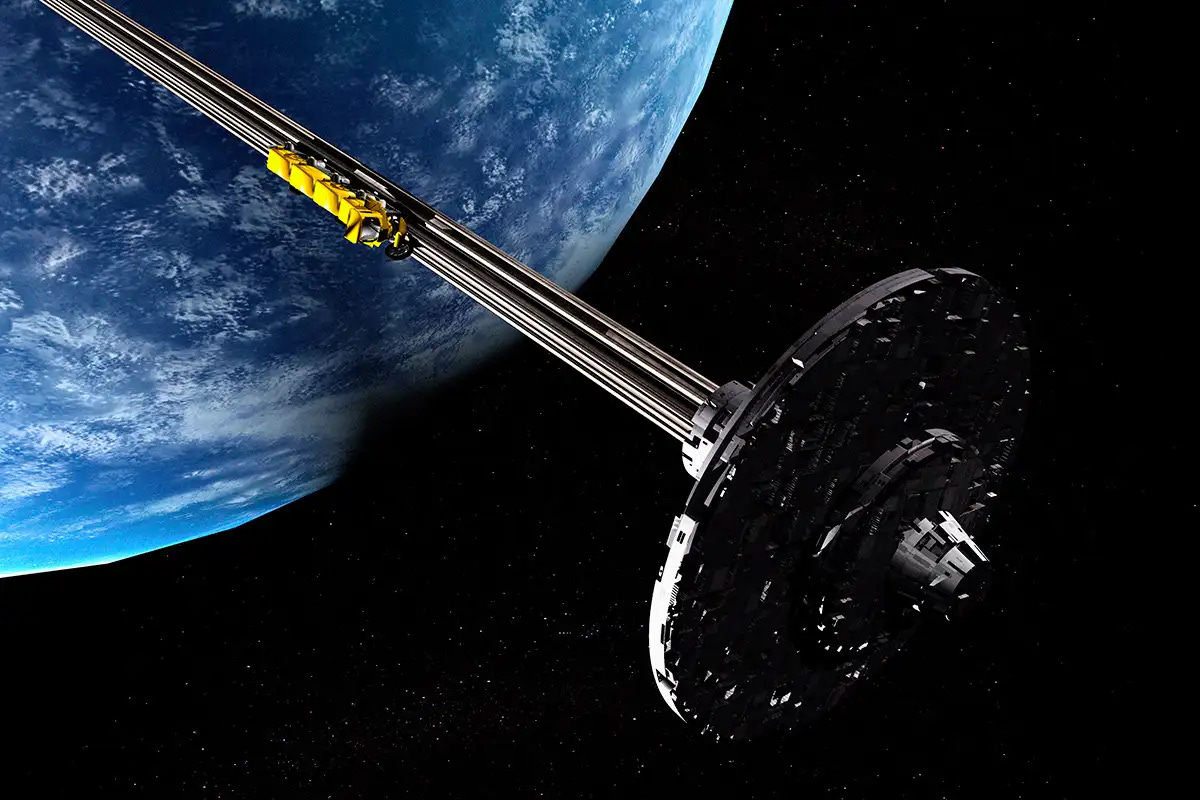

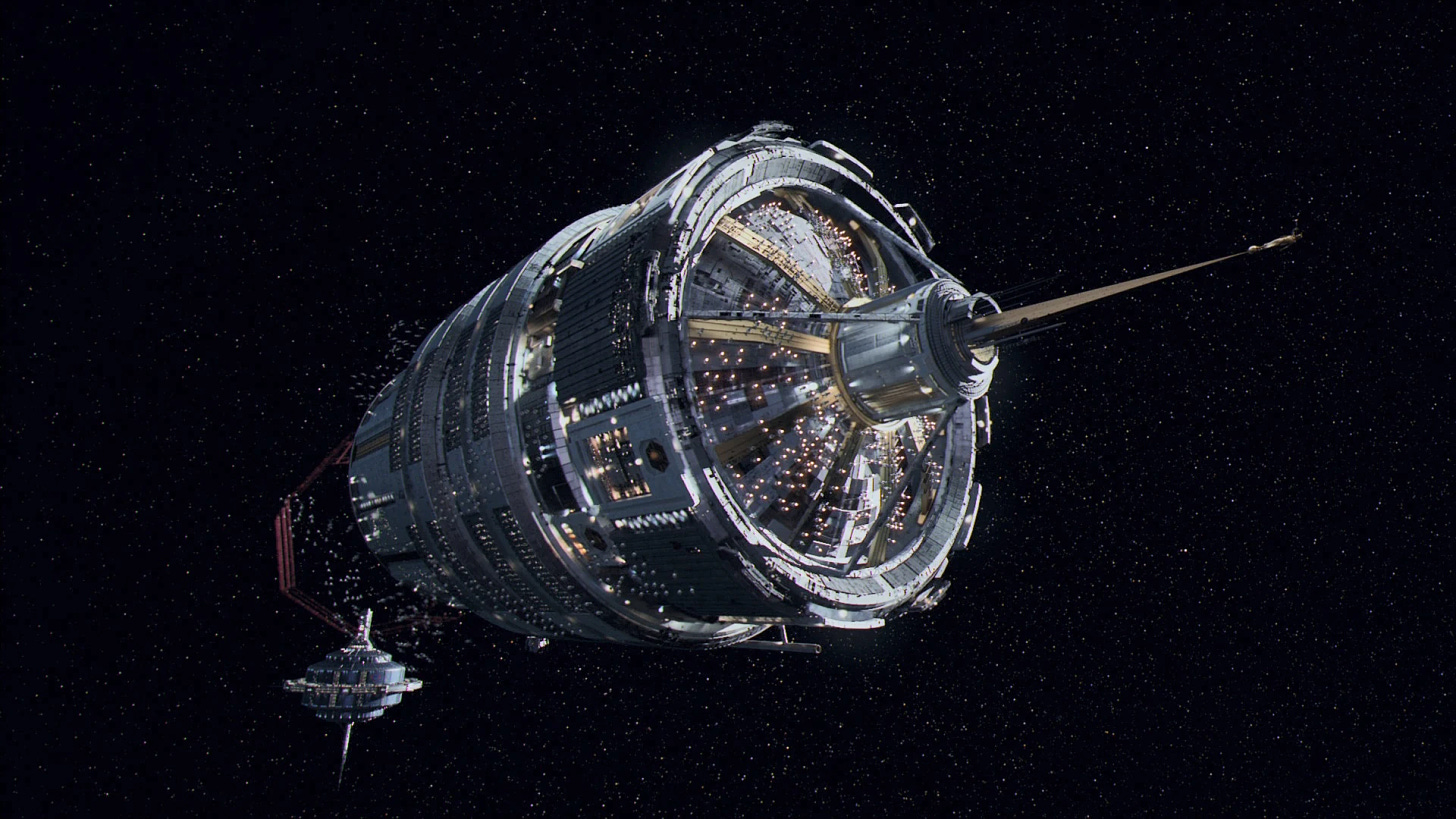

After the low-hanging fruit of ocean habitats and more efficient cities has been explored, we will go into space. We will need advanced electromagnetic launch platforms, space elevators, sophisticated reusable engines, or synthetic fuel, so that space flight can be sustained without consuming limited fossil or fusion fuel, although these fuels will be necessary temporarily.

Mars should be terraformed.

Once it comes time to leave our planet, we will travel to the next system. The journey will be long, but we will be healthy enough for it.

These changes require a better understanding of what makes a good society and better metrics of reality. GDP has become far too polluted with financial machinations and useless healthcare bills to be a useful metric. Life expectancy or quality-adjusted life expectancy is a much better metric; however, it must be properly understood to keep it from becoming fake as well. In general, we must aim to eliminate all unnecessary deaths, including deaths from easily preventable diseases like diabetes, from car accidents, and from crime.

Changes

We are going to completely revamp many things. Most media has no incentive to provide positive information. It needs to go. Most “science” is not much better. It has moved away from the building of correct, simple, and useful world models and has become a tool of authoritarian control. Universities may remain in some form; however, the functions they provide (research, elite signaling, networking, and education) will likely need to be split into separate institutions or re-examined in light of better metrics.

Money will need to be redone to give its users proper control over its creation. This could simply mean Bitcoin-like structures with a fixed supply, but it will likely mean something more sophisticated. Groups of individuals should be able to create their own internal currency coordination mechanisms whose supply can only be diluted through specific, software-enforced rules. Regardless of the method, the era of unlimited printing has come to an end. Chasing “investments” to preserve one’s savings from inflation cannot remain a massive drain on people’s mental resources.

Different government structures will arise, some more centralized than today, some less.

However, the general theme is that we need more and better coordination between people, whether through single-person leadership or market signals. Centralization and decentralization will ebb and flow based on techno-social forces that are somewhat beyond the scope of this essay.

AI

AI and software in general will help us a lot in this; however, there are going to be problems that AI can’t solve. Technology as a whole is not meant to remove all labor, but rather to reduce the workweek while making things cheaper. In the future, you can imagine most people still working 4-hour work weeks and getting paid enough for this work to support themselves, with ample time for leisure. We need to come back to the positive visions of the mid-20th century: a manual job should be a respected profession that pays enough to live on. Life generally gets better simply due to technological innovation “lifting all boats.”

Jobs will still exist since people will still want to trade skills and time with each other. However, many jobs can become more varied and complex as AI removes the drudgery from them. This is already happening with coding.

My general vision of AI is that it should be a tool, nothing more. You can occasionally augment your intelligence with AI the same way that a worker augments his strength by climbing into a giant tractor. However, this is a temporary enhancement and not some permanent installation. Making self-driving giant tractors is not a great idea.

Similarly, one will have to be careful in understanding how AI-created signals impact socio-economic signaling structures, so as to avoid polluting people’s minds with automated spam.

AI will solve the problem of “making a plan to remove pollutants from the water” or even “how to supply healthy animal protein to every person in the Solar System.”

AI will not solve “population ethics,” “what government is best” or “is genetic engineering ok.” AI will not re-ground science in the reality of phenomenology. Only a very wise human can do this and this is ok.

There is probably a limit to how intelligent it is safe to make an AI, especially if it is paired with a physical robot. However, the amount of actual useful work you can extract from it before reaching that limit is immense.

To use AIs as tools effectively and safely, at least some people need to understand them. Lots of software algorithms don’t need to be AI at all. Many existing AIs will need to be rewritten as provably correct algorithms for specific tasks, whether that is spaceship navigation or extracting the most likely correct information from a linguistic corpus.

The bottlenecks to proper usage of AI today are more social than technical. Even if AI can become more truthful, there are too many stakeholders who don’t want it to be truthful or who can’t recognize what “truth” even looks like.

We can and will use AI to solve technical challenges and speed up software creation, but we cannot rely on AI to solve problems of ethics, vision, and coordination. This will be done by a new generation of great leaders.

Culture

A future must be stable and environmentally sustainable; however, forcing this stability too quickly is itself a cause of instability. Certainly, we can no longer afford private jet travel to climate change conferences, nor a massive zero-sum fight for “social media attention” that many people feel compelled to participate in. A new culture of trust geared toward positive-sum games has to arise to enable a new culture of science and communication.

Culture needs to be stable. A culture cannot be based on promoting people for telling lies about it, for signaling disconnected from reality, or for fulfilling goals unrelated to actual human flourishing.

Social mobility needs to be tied to realistic accomplishments in positive-sum games or a high likelihood of them. Some stickiness of family wealth is simply a fact of social organization, but power and status cannot be too sticky.

Tradition and faith will stay. They will survive in the future because they keep pro-family culture alive.

We must remember that we build the future, and any tools to accomplish it, for humans: for each other and ourselves, and for our children and grandchildren. Humans must come first. Now and forever.