Post-Systematic and Systematic Identity, a Dialogue, Part 1

To optimize or not to optimize, that is the question

Any resemblance of these fictional characters to real people is not a coincidence because nothing is ever really a coincidence.

A systematic identity is an idea of oneself as part of a broader ethical system, from which one's self-evaluation of goodness derives. Examples of systematic identities:

a) neoliberal: the system you like is the notion of "fair GDP," where you wish for the great benefits of the market to be more evenly distributed

b) libertarian: the system you like is freedom from government "coercion" and taxes, so as to allow individuals to act freely

c) effective altruist: the system you like is a form of practical utilitarianism in which one hopes to raise the overall amount of "utility," as measured, for example, by QALYs.

d) "normal middle-class person working in software": this also usually implies a certain degree of systematicity of identity. One might not explicitly subscribe to a strong belief structure, but in order to show up to work time and time again, one generally has to believe in some positive vision of technology, such as the idea that technological progress and the automation of certain tasks are a net good for society. This frequently also implies a belief in technology as an important tool of the individual or of another system: a tool that they use, not one that uses them. Not everyone shares these views, but a techno-positive vision, even when not stated explicitly, is a common underlying set of beliefs and cultural assumptions.

Now, all of these have merit in their ability to explain parts of the world and guide people to action.

All of these approach questions of politics, policy, personal affairs and coordination with certainty. All of them share a desire for other individuals in the world to realize their "values." They simply disagree about what prevents this realization of "values." EAs might think it is global disease or upcoming cataclysmic technology; libertarians might think it is government suppressing "the market" and "individuals." Techno-optimists view the absence of technology as a key problem keeping people from fulfilling their full potential. All of these derive their system from a single principle or a small set of principles. Libertarians have the NAP (non-aggression principle), techno-optimists have the idea that more use of technology is better, EAs have the idea that more QALYs are better, and rationalists have "proper probability updates."

So without further ado, I present a debate between a systematic (S:) and a post-systematic or post-rationalist (P:) identity. I use the terms "post-rationalist" and "post-systematic" interchangeably here. "Post-rationalist" is the more established term; however, it is usually contrasted with, and a reaction to, "rationalist," which is one particular systematic identity, while in many ways it is also a reaction to all systematic identities. Other terms that point to the same set of ideas are "meta-rational," "meta-systematic," etc.

S: I sincerely challenge you to give a purely positive account of "post-rationality," one which makes no mention of the concepts being denied or criticized, in the way that a smart atheist, so challenged for a positive account of what they do believe in, can explain purely positive ideals of probability and decision which make no mention of God.

P: One key insight that has appeared in multiple post-rationalists’ texts is the causal relationship between morality, identity and group conflict. “Systematic” thinkers craft their identity based on a particular set of principles, be it the desire to be “less wrong” as measured by probability calibration, or a desire to shape the world according to liberty or non-aggression.

They happen to exist in conflict with other groups that for some reason can’t seem to follow their obvious and common-sense principles. For rationalists, those groups might be religious people clinging to “faith,” journalists failing to cite proper statistics when reporting on outliers, or politicians who “paint a picture” or “persuade” while not adhering to the facts. The rationalists occasionally wonder why the world isn’t rational, hasn’t adopted their insights or doesn’t see the extreme value of knowing the True Law of Thinking.

The post-rationalist insight is that the conflict between holders of a systematic identity and non-holders is not an arbitrary feature of the landscape of the universe, but rather the originator of both groups. By virtue of an In-group existing, there must be an Out-group, and there must have been an original conflict that gave birth to the division. This conflict could have taken many forms, from ethnic, political, inter-institutional or old money vs new money, to one person simply being killed in an all-against-one sacrifice. Whatever it was, the conflict drove the division and eventually necessitated the creation of labels: Our rational thinkers vs Their irrational believers, or Our God-fearing people vs Their heretic iconoclasts.

The labels were not created out of thin air; rather, they generally had to capture some real aspects of the human psyche, common-sense reasoning and ethics for both sides. They necessarily had to omit some as well.

S: This seems like an interesting historical point, but I fail to see how this historical question has much bearing on my beautiful modern system. Sure, in the past, there were people who called themselves more rational or ethical than others, but they were farther away from the true enlightened understanding of value than we are. Each system disagrees about what this ultimate value is based on, such as an abstract notion of freedom or hedonistic utility, but that's simply a drawback of <other systems> and a vital feature of <my system>. Besides, if the key point of post-rationality is understanding this causal relationship, I don’t see how this fundamentally alters the theory. I should still be able to investigate the history, form proper beliefs about it and make the necessary decisions.

P: If you investigate this idea far enough, it challenges every concept, even meta-level ones such as the importance of beliefs at all; however, it’s easiest to see it fundamentally challenging the systematic notion of “values,” or, as some people say, “one’s utility function.” In the systematic worldview, people somehow spring into the world “full of values” that they implicitly or explicitly carry forth. You might have seen studies about how political attitudes are transmitted genetically or culturally, but they seem of little relevance to the self-reinforcing imperative to optimize the world according to one’s values or “utility function.” Effective Altruists, for example, would not exist as a group in a world where their version of “doing the most good” was as obvious as breathing air. The only reason Effective Altruism can function as a group is that EA is able to demarcate a boundary not just conceptually, but also tribally. On the other side of this divide there exist people with seemingly diametrically opposed values who also work very hard to change the world to their liking. While they might not call this work “optimizing one’s values,” it uses the same strategies, with varying levels of effectiveness: you can constantly see them arguing on social media, fighting for the limited attention of the elites, writing snarky blog posts, etc.

S: Hold on a second. <My system> already incorporates a way to resolve value disagreements, so it is already a "meta-value" system. If only other people could see that their values would actually be respected as long as everyone agreed on <my system>. By advocating for my system, I am setting up a framework within which others can optimize their own values.

P: Notably, each system sorts the ways of expressing values into two "hierarchies": those it allows and those it disallows. Libertarianism allows people to express their values through money, but not through the "violence" of government. More generally, each systematic identity resolves value conflicts by reducing them to a common, legible value currency, which could be actual currency, for example. The issue is that this value reduction was itself made as a tool of value conflict, and thus cannot be a "meta-value" system. You can think that you are setting up others' values to be optimized, but you are still "optimizing your own." A better approach is to recognize that “optimizing one’s values” is an inherently conflictual stance that puts two people or groups into unnecessary competition that could be resolved. How to do so in practice is a trickier question.

S: Hold on, this simply sounds like the value you happen to hold is something like “peacefulness” or “avoidance of inter-group conflicts, including verbal ones.” This might seem like a fine value, but I don’t see any reason to elevate it a priori to a more sacred status than the many forms of utilitarianism or deontology.

P: The value of “peacefulness,” or related concepts, is an OK approximation: an attempt to see a new and more complex paradigm in terms of a simpler one. However, the situation here is more complex. Let’s imagine two hypothetical people, Alice and Bob, who are in a value conflict with each other. Alice wants variable X to go as high as possible. Bob wants X to go as low as possible, but doesn’t care once X is at or below a certain minimum value (say 0). Bob is stronger and more powerful than Alice.

What would happen in this situation in the systematic worldview is that Alice would constantly “try to optimize” X, putting effort into moving X up. Bob, noticing that X has moved from his desired value of 0, would use his power to push it back down. Alice would lament the situation but could justify her actions by saying that, “counterfactually,” she accomplished something, because X could be even lower if she hadn’t tried to optimize it. What doesn’t seem to occur to her is that the “optimization of values” she is doing is itself causing Bob’s reaction.

You can substitute many things for X in this case. X could be “attention devoted to issues outside of people’s locale”, X could be “equality in every dimension between certain groups”, X could be “desire to be left alone by the government.”
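A toy simulation can make the feedback loop in the Alice and Bob example concrete. This is a minimal sketch under assumptions that are not in the dialogue: each round Alice raises X by a fixed amount, and Bob, being stronger, then lowers X by up to a larger fixed amount, but only while X is above his target of 0. The function `simulate` and its parameters are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    final_x: float
    alice_effort: float
    bob_effort: float


def simulate(rounds: int, alice_push: float, bob_strength: float,
             bob_target: float = 0.0) -> Outcome:
    """Toy model of the Alice/Bob conflict over a single variable X.

    Hypothetical assumptions: each round Alice raises X by `alice_push`;
    Bob then lowers X by up to `bob_strength`, but only while X is above
    `bob_target` (he does not care how far below the target X sits).
    """
    x = bob_target
    alice_effort = 0.0
    bob_effort = 0.0
    for _ in range(rounds):
        # Alice "optimizes": she spends effort moving X up.
        x += alice_push
        alice_effort += alice_push
        # Bob reacts only to the part of X that exceeds his target.
        excess = max(0.0, x - bob_target)
        correction = min(excess, bob_strength)
        x -= correction
        bob_effort += correction
    return Outcome(x, alice_effort, bob_effort)


# Alice keeps optimizing against a stronger Bob.
print(simulate(rounds=100, alice_push=1.0, bob_strength=3.0))
# -> Outcome(final_x=0.0, alice_effort=100.0, bob_effort=100.0)

# Alice stops optimizing: X is unchanged and Bob never has to act.
print(simulate(rounds=100, alice_push=0.0, bob_strength=3.0))
# -> Outcome(final_x=0.0, alice_effort=0.0, bob_effort=0.0)
```

In this toy setup X ends at Bob's target either way; the only thing Alice's optimizing changes is the 200 units of combined effort spent on the conflict, half of it Bob's reaction to her pushing. The model deliberately leaves out anything else that might move X, so it illustrates the shape of the loop rather than measuring anything.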

S: This still seems like the wrong set of background assumptions on Alice’s part. If she rationally understood the situation, she would realize that X cannot be moved in that way and act accordingly. If X is not the only thing she cares about, that means looking at things other than X.

P: Looking at things other than X, rather than continuing hopeless fights, is in fact one of the possible desirable outcomes. I suspect that at least some part of Alice does realize what is happening, but that’s not vital to the point. The point is that the entire optimization “frame of mind” can become counterproductive in this situation. Also, the adversarial nature of “values” is not the exception; it is the rule.

S: This seems similar to Robin Hanson’s idea of “trying to pull the political will sideways to the wedge issues.” So, even if I suppose that the structure of society makes optimization inherently adversarial, I still don’t see how that changes anything in the theoretical framework. People still optimize their values in whatever way possible.

P: Once again, the idea of “pulling sideways” is good, but it slightly underestimates the required mental model. It’s not that you first understand your values, then look around to see if there are adversaries and work around them. By virtue of having verbal “values” at all, you are guaranteed to have adversaries. Think of the post-rational insight on values as a giant government-mandated warning label that comes with every “identity” or “value”: this is bound to cause arbitrarily complex conflict if you push it hard enough. This is still not a fully general argument for nihilism; there is still right and wrong action at the end of the day, and understanding value-based reasoning is still a good tool. “Keep your identity small” is a good warning label. However, the idea is to have a good causal model of how **identity comes into being** in order to understand the reasoning behind such a suggestion.

S: If some situations are more common than others, that is still within the realm of <my system>. Also, the historical origin of identity does not matter, since values “screen off” the causal process that created them. It doesn’t really matter for my actions if I am a robot created by one party to fight another. Whatever I believe my imperative to be, I will be doing. I could be wrong or deluded about my true values, which is a separate error, but if there is alignment and understanding, the historical facts of culture and evolution do not matter.

P: In a pure, isolated universe where you are the only rational being, it doesn’t matter. However, it matters once you realize you have adversaries who might, in some ways, not be too different from you. They may be running a similar algorithm, copying each other’s object-level tactics to use against each other, all while claiming to be different due to diverging values. The situation changes then, as you can achieve a better overall outcome if you learn to cooperate or “non-optimize.”

S: Ah, so of course the situation changes if you suddenly encounter agents running algorithms like your own. In a sense, you can study higher-level decision theory and act as if you are a model for all those who are similar. Is post-rationality just repackaging those ideas?

P: In terms of mapping decision theories to Kegan levels, I would put Evidential Decision Theory at Kegan 3 (I copy successful people’s actions because the actions are correlated with success). Causal Decision Theory is roughly Kegan 4 (I limit myself to those actions where I have a good model of them causing success). Properly mastering Functional Decision Theory is Kegan 5 / post-rationality (I act such that I am a proper model for those who are similar to me or wish to imitate me), which requires true and hard-to-acquire information about which people’s algorithms are identical to one’s own. (For more discussion of Kegan, see [Developing ethical, social, and cognitive competence](https://vividness.live/2015/10/12/developing-ethical-social-and-cognitive-competence/).)
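The gap between causal reasoning and "acting as a model for those who are similar" is often illustrated with a prisoner's dilemma against an exact twin. The sketch below is a simplified illustration, not a full implementation of either decision theory; the payoff numbers are the usual textbook values, assumed here for illustration, and `causal_choice` / `functional_choice` are hypothetical names.

```python
# Payoff to "me" for (my_move, other_move). Standard illustrative
# prisoner's dilemma values; the specific numbers are an assumption.
PAYOFF = {
    ("C", "C"): 3,
    ("C", "D"): 0,
    ("D", "C"): 5,
    ("D", "D"): 1,
}
MOVES = ("C", "D")


def causal_choice(belief_other_cooperates: float) -> str:
    """Treat the other agent's move as fixed and independent of mine.

    Under these payoffs defection dominates: it pays more whatever
    the other agent does, so it wins for every belief in [0, 1].
    """
    p = belief_other_cooperates

    def expected(move: str) -> float:
        return p * PAYOFF[(move, "C")] + (1 - p) * PAYOFF[(move, "D")]

    return max(MOVES, key=expected)


def functional_choice() -> str:
    """Assume the other agent runs the same algorithm, so it outputs my move.

    Only the diagonal outcomes (C, C) and (D, D) are then reachable,
    and mutual cooperation pays more than mutual defection.
    """
    return max(MOVES, key=lambda move: PAYOFF[(move, move)])


print(causal_choice(0.9))   # D
print(functional_choice())  # C
```

The arithmetic is the easy part. The hard part the dialogue points to is knowing whether the assumption inside `functional_choice` actually holds, i.e. whether the other agent really runs an algorithm identical to one's own.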

S: Are those the mathematical foundation of post-rationality, or is there more?

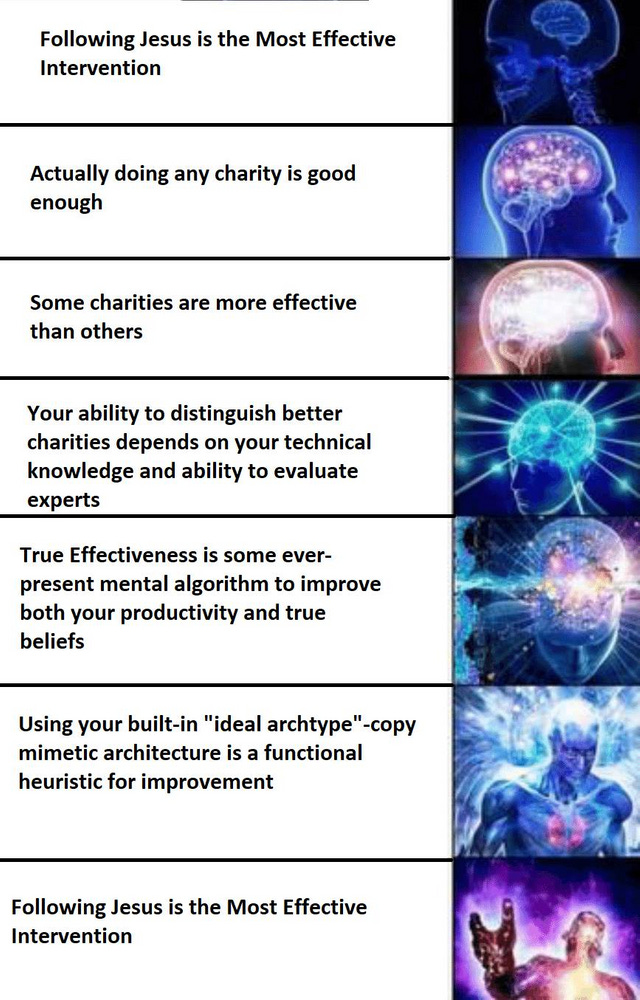

P: The primary disagreement about the mathematical foundations is that of using math prescriptively vs descriptively: “here is how proper belief updates should work” or "here is how a rational economic agent should behave" vs “how is any of this brain stuff working in the first place?” The systematic view is: this person’s actions do not look like a proper “belief update” / "action corresponding to my system," therefore they’re wrong! The post-rationalist view first asks what mathematical model does fit this person’s behavior, or which particular social patterns and protocols enable and encourage this behavior. The person could still be wrong, but the causal structures of wrongness are worth identifying. More importantly, “rightness” should be a plausibly executable program in the human brain, so “rightness of human action and belief algorithms” could be better approximated by “do what this ideal human is doing” than by trying to “approximate” this theoretical framework. Humans do not cope nearly as well with commands such as “approximate this math” as with “approximate this guy.” Not that “approximate this math” isn’t a useful skill per se.
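One way to picture the prescriptive vs descriptive contrast is with belief updates. The sketch below assumes a simple model that is not from the dialogue: a person's reported belief is treated as a weighted update in log-odds space, where a weight of 1 is exactly Bayes' rule. The prescriptive function says what the posterior *should* be; the descriptive function fits the weight that best explains what the person actually reports. `hypothetical_person` is a stand-in that generates synthetic reports with a known weight, so no real data is involved.

```python
import math


def logit(p: float) -> float:
    return math.log(p / (1 - p))


def sigmoid(z: float) -> float:
    return 1 / (1 + math.exp(-z))


def bayes_posterior(prior: float, likelihood_ratio: float) -> float:
    """Prescriptive: the posterior Bayes' rule says one *should* report."""
    return sigmoid(logit(prior) + math.log(likelihood_ratio))


def fit_update_weight(observations) -> float:
    """Descriptive: fit w in logit(reported) = logit(prior) + w * log(LR).

    Least squares through the origin on the log-odds shift. w == 1 matches
    Bayes; w < 1 means the person moves less than Bayes would prescribe.
    """
    num = 0.0
    den = 0.0
    for prior, lr, reported in observations:
        x = math.log(lr)
        y = logit(reported) - logit(prior)
        num += x * y
        den += x * x
    return num / den


def hypothetical_person(prior: float, lr: float, weight: float = 0.5) -> float:
    """Synthetic stand-in for observed behavior: updates with a fixed weight."""
    return sigmoid(logit(prior) + weight * math.log(lr))


cases = [(0.5, 4.0), (0.2, 3.0), (0.7, 0.25), (0.4, 10.0)]
observations = [(p, lr, hypothetical_person(p, lr)) for p, lr in cases]

print(round(bayes_posterior(0.5, 4.0), 3))            # what Bayes prescribes: 0.8
print(round(observations[0][2], 3))                   # what the person reports: 0.667
print(round(fit_update_weight(observations), 3))      # fitted weight: 0.5
```

The systematic reading stops at "0.667 is not 0.8, so the update is wrong"; the descriptive reading asks which model reproduces the 0.667 and why. A real analysis would need a richer model than a single weight; this only shows the difference in the question being asked.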